After an emergency at my previous webhost, I've switched to a new webhost. If you see any missing content, let me know!

Dude, Where's my Command?

The Dilemma

This post was inspired by a recent occurrence at work. I have built a framework which constructs documents based on a lists of functions in a module specific to that kind of document.

I found myself running into an issue where even though I knew there was a command named a certain thing, and that the function was correctly exported from the module, PowerShell wasn't finding the command.

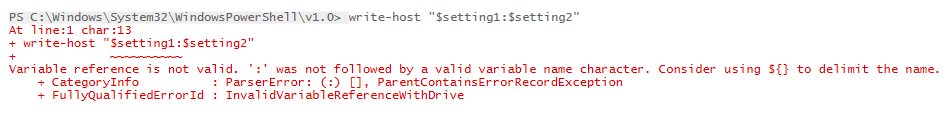

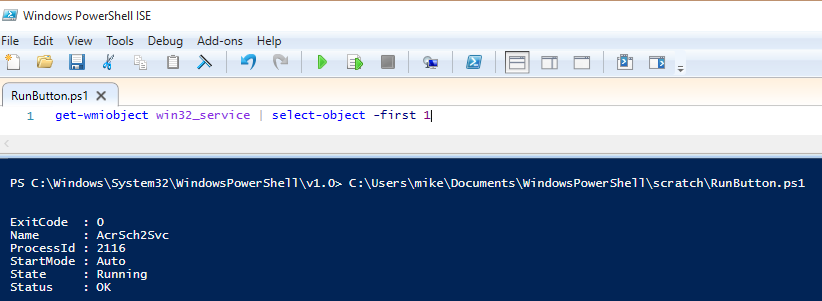

My code looked something like this:

$module='Module1'

Git - The 5 Percent that I Always Use

One of the reasons I got into IT was that I really enjoy learning new things. Unfortunately, there are so many things to learn that it's easy to get overwhelmed. Does it make sense to do a deep-dive into each technology that you use, or does it sometimes make sense to skim the cream off the top and move on to the next technology?

One of the reasons I got into IT was that I really enjoy learning new things. Unfortunately, there are so many things to learn that it's easy to get overwhelmed. Does it make sense to do a deep-dive into each technology that you use, or does it sometimes make sense to skim the cream off the top and move on to the next technology?

In this post, I'm talking about git, the distributed source control system that is used in GitHub, Azure DevOps Repos, GitLab, and countless other places. Git can be really complicated, and intimidating, so I'm going to try to convey the tiny fragment of git which allows me to get my work done.

Just Eight Commands

Here are the commands I regularly use:

- git clone

- git checkout

- git add

- git commit

- git push

- git pull

- git branch

- (occasionally) git stash

That's it. Eight commands.

Here's how I do things. If you're a git guru and see ways to improve this simple workflow, let me know. Also, know that I'm usually working on projects by myself, but I try to work like I would on a team.

Starting Off

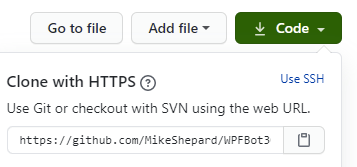

To start, I clone the repository that I'm going to be working on. For instance, if I wanted to work on WPFBot, I'd go to the github repo for it and get the URL:

With that, I can issue the command:

git clone https://github.com/MikeShepard/WPFBot3000

and git will copy the remote repository into a local directory called WPFBot3000 ready for me to edit.

If I'm starting a new project, I would create a repo (Github, AzureDevops, etc.) and clone it locally.

I could create it locally and push it up, but this way I only have one method to remember.

Preparing for changes

Before I make any changes, I need to make sure I'm on a branch dedicated to my changes.

If this is a new set of changes I'm working on, I can create the branch and switch to it with the command:

git checkout -b BranchName

(where BranchName is a descriptive label for the changes I'm making.

If the branch already exists,

git checkout BranchName

will switch me to the existing branch.

Making Changes

Making changes is easy. I just make the changes. I can edit files, move things around, delete files, create folders, etc.

As long as the changes are in the repo (the WPFBot3000 folder, in this case), git will find them.

There are no git commands necessary for this to happen.

Staging the Changes

I tell git that I've made changes that I like using the command

git add .

This says to "stage" any changes that I've made anywhere in the repo.

Staging changes isn't "final" in any way. It just says that these changes are ones I'm interested in.

As before, I could use sophisticated wildcards and parameters to pick what to stage, but by working on short-lived branches I almost always want to stage everything I've changed.

Committing the Changes

Committing the changes is like setting a milestone. This is a point-in-time where the state of these files is "interesting".

I do this using the command: git commit -m 'A descriptive message about what changes I made'

A few things to note about commits:

- Interesting doesn't always mean finished. I can commit several times without pushing the code anywhere.

- "descriptive" is subjective. There has been a lot written about good commit messages. Mine probably are bad.

Repeat the Cycle

If I'm not done with the changes, I can continue the last 3 steps:

- Making Changes

- Staging the Changes

- Committing the Changes

All of these steps are happening locally, but by committing often I have chances to roll-back to different places in time if I feel like it (I rarely do).

Completing the Changes

The next step is a bit of a cheat.

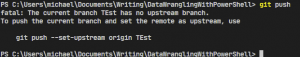

I hate remembering long git commands, so rather than remembering how to link my local branch with the remote repo, I simply say

git push

Git will reply with an error message because it doesn't know what remote branch to push it to.

In the error message, though, it tells me how to do what I want:

In this case, it's

git push --set upstream origin TEst (and yes, branch names are case-sensitive)

Pull Request

At this point, I go to Azure DevOps or GitHub (I don't use GitLab much) and browse to the repo.

It will usually have a notice that a branch was just changed and will give me the opportunity to create a pull request.

A pull request is a request to merge (or pull) changes from one branch into another.

Typically, you're merging (or pulling) changes from your branch into master.

Once you've created the pull request, this is the time for your team members to review the changes, make suggestions, and approve or deny the request.

If you're working alone, you can approve the request yourself.

I also select the "delete the branch after merge" option so I don't have a bunch of old branches hanging around.

If the merge is successful, then the changes are now part of the master branch on the remote repo.

They are only in my feature branch locally, though.

Pulling Changes Down

To get the updated master branch, I have to first switch to it:

git checkout master

Once I'm on the branch, I can

git pull

to pull the changes down.

Cleaning up and Starting over

Now that our changes are in master, I can remove the local branch with:

git branch -d BranchName

At this point, I don't usually know what I'm going to work on next.

I have a bad habit of accidentally making changes in master locally, though so my next step helps me avoid that.

I simply check out a new branch called Next like this:

git checkout -b Next

Note: This is exactly what we did at the beginning, just with a temporary branch name of Next.

When I'm ready to work on a real set of changes, I can rename the branch with

git branch -m NewBranchName

Summary

That probably seemed like a lot, but here are all of the commands (with some obvious placeholders)

- git clone <url>

- git checkout -b BranchName

- git add .

- git commit -m 'Fantastic commit message'

- <repeat add/commit until ready to push>

- git push (followed by copy/paste from the error message)

- <proceed to create and complete the pull request>

- git checkout master

- git pull

- git branch -d BranchName

- git checkout -b Next

Bonus command - git stash

If I find I've made changes in master, git stash will shelve the changes. I can then get on the right branch and do a git stash pop to get the changes back.

With the adoption of the git checkout -b Next protocol, though, I don't find myself needing this very often.

What do you think? Does this help you get your head around using git?

Very Verbose PowerShell Output

Have you ever been writing a PowerShell script and wanted verbose output to show up no matter what?

You may have thought of doing something like this:

#Save the preference variable so you can put it back later

A PowerShell Parameter Puzzler

I ran across an interesting PowerShell behavior today thanks to a coworker (hi Matt!).

It involved a function with just a few arguments. When I looked at the syntax something looked off.

I am not going to recreate the exact scenario I found (because it involved a cmdlet written in C#), but I was able to recreate a similar issue using advanced functions.

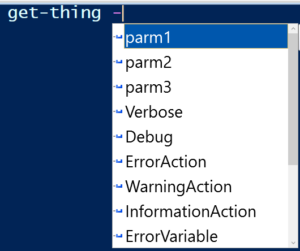

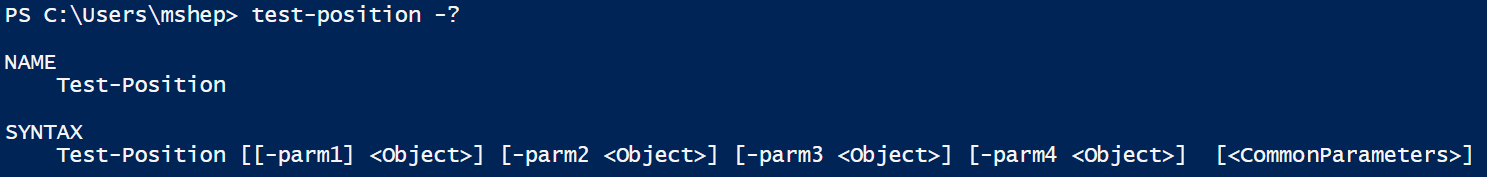

So consider that you are entering a command and you see an intellisense prompt like this one:

You would rightfully assume that it had 3 parameters.

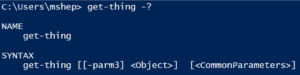

If you looked at the syntax using Get-Help (or the -? switch) you would see something strange:

Wait...where did the other 2 parameters go?

Using Get-Command get-Thing -syntax shows essentially the same result:

![]()

This was the kind of problem I ran into. I was given a cmdlet, and when I looked at the syntax to see the parameters, I didn't find the parameters I expected. They were there, but they weren't shown by Get-Command.

The "puzzle" is a bit less confusing when you see the code of the function:

function get-thing {

The PowerShell Conference Book

Back in May, Mike Robbins (@mikefrobbins) asked if I wanted to contribute a chapter to a book he was putting together. The book would include chapters from different scripters in the PowerShell community and each would provide material that would be similar to a session at a conference.

In addition, the proceeds from the sale of the book would support the DevOpsCollective OnRamp scholarship for new IT pros going to the PowerShell and DevOps Conference in 2019.

Sounded like fun, so I signed on. We did the writing in markdown in a private GitHub repo. Not at all what I'm used to for writing but it was a really good experience. Mike was joined by Mike Lombardi (@barbariankb, from the STLPSUG) and Jeff Hicks (@jeffhicks). They did a great job corralling over 30 writers and at this point, the book is at 90% complete.

If you haven't heard about this, go over to leanpub and take a look. The table of contents alone should convince you that this book is worth your time.

My chapter, Rethinking PowerShell GUIs, went live last friday (8/3) and talks about the beginnings of WPFBot3000 and a "companion" module called ContextSensitiveMenus which I haven't blogged about yet.

I would enjoy hearing feedback on my chapter and the book in general.

--Mike

PowerShell DSL Module Considerations

Just a quick note to mention a couple of things I've come across with PowerShell modules that encapsulate DSLs (Domain Specific Languages, specifically WPFBot3000).

PowerShell Command Name Warnings

PowerShell modules have always issued warnings if they contain commands that don't use approved verbs. What's fun with modules for DSLs is that the commands in general don't use verbs at all. Since these commands aren't "proper", you might expect a warning. You won't get one, though.

PowerShell Module Autoloading

PowerShell modules have had autoloading since v3.0. Simply put, if you use a cmdlet that isn't present in your session PowerShell will look in all of the modules in the PSModulePath and try to find the cmdlet somewhere. If it finds it, PowerShell imports the module quietly behind the scenes.

This doesn't work out of the box with DSLs. The reason is simple.

DSLs generally have commands that aren't in the verb-noun form that PowerShell is expecting for a cmdlet, so it doesn't try to look for the command at all.

The fix for this is simple (for WPFBot3000, at least). All I've done is replace the two top-level commands (Window and Dialog) with well-formed cmdlet names (New-WPFBotWindow and Invoke-WPFBotDialog). Then, I create aliases (Window and Dialog) pointing to these commands.

Now that I think of it, I'm not sure why that works. If PowerShell is looking for the aliases, why wasn't it finding the commands? Nevertheless, it works.

That's all for today, just a couple of oddities.

--Mike

WPFBot3000 - Approaching 1.0

I just pushed version 0.9.18 of WPFBot3000 to the PowerShell Gallery and would love to get feedback. I've been poking and prodding, refactoring and shuffling for the last month or so.

In that time I've added the following:

- Attached Properties (like Grid.Row or Dockpanel.Top)

- DockPanels

- A separate "DataEntryGrid" to help with complex layout

- A ton of other controls (DataGrid and ListView are examples)

- A really exciting BYOC (bring your own controls) set of functions

Mostly, though, I've tried to focus on one thing: reducing the code needed to build a UI.

To that end, here are a few more additions:

- Variables for all named controls (no more need to GetControlByName()

- -ShowForValue switch on Window which makes it work similarly to Dialog

In case you haven't looked at this before, here's the easy demo:

$output=Dialog {

Introducing WPFBot3000

Preamble

After 2 "intro" posts about writing a DSL for WPF, I decided to just bit the bullet and publish the project. It has been bubbling around in my head (and in github) for over 6 months and rather than give it out in installments I decided that I would rather just have it in front of a bunch of people. You can find WPFBot3000 here.

Before the code, a few remarks

A few things I need to say before I get to showing example code. First, naming is hard. The repo for this has been called "WPF_DSL" until about 2 hours ago. I decided on the way home from work that it needed a better name. Since it was similar in form to my other DSL project (VisioBot3000), the name should have been obvious to me a long time ago.

Second, the main reasons I wrote this are:

- As an example of a DSL in PowerShell

- To allow for easier creation of WPF windows

- Because I'm really not that good at WPF

In light of that last point, if you're looking at the code in the repo and you see something particularly horrible, please enter an issue (or even better a pull request with a fix). As far as the first two go, you can be the judge after you've seen some examples.

Installing WPFBot3000

WPFBot3000 can be found in the PowerShell Gallery, so if you want to install it for all users you can do this:

#in an elevated session

A PowerShell WPF DSL (Part 2) - Getting Control Values

Some Background

Before I start, I should probably take a minute to explain what a DSL is and why you would want to use one.

A DSL (domain-specific language) is a (usually small) language where the vocabulary comes from a specific problem domain (or subject). Note that this has nothing to do with Active Directory domains, so that might have been confusing.

By using words that are naturally used in describing problems in this subject, it is possible to write using the DSL in ways that look less like programming and more like describing the solution.

For instance, in Pester, you might write part of a unit test like this (3.0 syntax):

It "Has a non-negative result" {

Starting a PowerShell DSL for WPF Apps

The problems

There is always a problem. In my case, I had two problems.

First, when I teach PowerShell, I mention that it's a nice language for writing DSLs (domain-specific languages). If you want an in-depth look, Kevin Marquette has a great series on writing DSLs. I highly recommend reading it (and everything else he's written). I'm going to cover some of the same material he did, but differently.

Anyway, back to the story. When I mention DSLs, I generally get a lot of blank stares. Then, I get to try to explain them, but I don't have a great example (Pester, Psake, and DSC are a bit advanced). So I was looking for a DSL I could write that would be easy to explain, with the code and output straight-forward. That's the first problem.

The second problem, again from teaching, is when I talk about writing GUIs. This is always a popular topic, and it's a lot of fun to discuss the different options. I get asked about when it is a good idea to write a UI in PowerShell vs. when it would make more sense to do it in a managed language. My answer is something along the lines of "If it's something simple like a data-entry form, then PowerShell is a great fit. If it has much complexity you are probably going to want to use C#." I got thinking after teaching last November that writing a data-entry form in PowerShell really isn't that easy.

PowerShell to the rescue!

I decided that I needed to remedy the situation. Writing a data-entry form (where we're not super concerned about the look-and-feel) should be trivial.

My first thought was that I should be able to write something like this:

Window {

Celebrating 1 Year of Southwest Missouri PowerShell User Group (SWMOPSUG)

So...we've been meeting in Springfield, MO for a year now.

Our first meeting was in June 2017 and had our "anniversary" meeting earlier this month. Thanks to Scott for presenting a talk about PowerShell jobs!

We haven't had big crowds, but we have had good consistent attendance. Looking forward to another year and reaching out to more people in the community.

If you're in the southwest Missouri area (or close by), let me know and I'll be happy to see where we can get you scheduled to speak.

If you're interested, you can find details about upcoming events on our meetup page.

--Mike

A Modest Proposal about PowerShell Strings

If you've used the PowerShell Script Analyzer before you are probably aware that you shouldn't be using double-quoted strings if there aren't any escape characters, variables, or subexpressions in them. The analyzer will flag unnecessary double-quotes as problems. That is because double-quoted strings are essentially expressions that PowerShell needs to evaluate.

Let me repeat that...

Double-quoted strings are expressions that PowerShell needs to evaluate.

Single-quoted strings, on the other hand, are just strings. There's nothing (aside from doubled single-quotes being replaced by a single single-quote) that needs to be done with them.

When I teach about PowerShell, I usually say something along the lines of "double quotes are an indication to PowerShell that there's some work to do here". I was thinking about this the other day and I think a shift in terminology will be helpful. Calling them double- and single-quoted strings is descriptive, but not very helpful.

My proposal is simply this:

Single-quoted strings will henceforth be called "strings".

Double-quoted strings will henceforth be called "string expressions".

"String expression" gives me the idea that it is going to be evaluated. Which fits double-quoted strings perfectly. It's also shorter than saying "double-quoted string", which is a bonus.

"String" sounds (to me, at least), like something static. Matches the situation again.

For those that think "why not just use double-quoted strings all the time?", I would counter: Would you use Resolve-Path against C:\? Probably not, because it would be a waste of time. There's nothing to do. Resolve-Path expands wildcards and there are no wildcards there. I guess you could use Resolve-Path with every path just to be safe...but you get the point.

What do you think of this proposal? Do "string" and "string expression" convey enough that they should be used? Let me know in the comments.

FWIW, I'm going to be using them whether you agree. :-)

--Mike

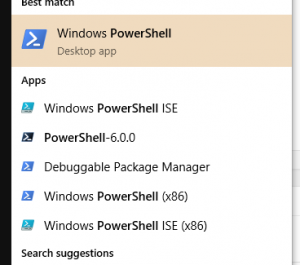

Get-Learning - Launching Powershell

I thought I'd take a few minutes and show several ways to launch PowerShell. I'll start with the basics and maybe by the end there will be something you haven't seen

before.

The Start Menu

One of the first places to look for PowerShell is in the Start Menu. Opening the start menu and typing "PowerShell" will get you something like this:

Note that there are several options

- Windows PowerShell (64-bit console)

- Windows PowerShell ISE (64-bit ISE)

- PowerShell-6.0.0 (PowerShell Core...you might not have this)

- Windows PowerShell (x86) (32-bit console)

- Windows PowerShell ISE (x86) (32-bit ISE)

There's also a "debuggable package manager", which is a Visual Studio 2017 tool (and essentially the 32-bit console).

For each of these, you can click on it to launch, but there are other options as well:

- Click to run a standard PowerShell session

- Right-Click and choose "Run As Administrator" to run an elevated session (if you are a local administrator)

You'll also notice that the right-click menu has options to run the other versions (ISE/Console, 32/64-bit).

The Run dialog

From the Run dialog (Windows-R), you can type PowerShell or PowerShell_ISE to launch the 64-bit versions of these tools.

What you may not know (and I just learned this recently, thanks Scott) is that if you hit ctrl-shift-enter, instead of just hitting enter, it will run them elevated (as administrator).

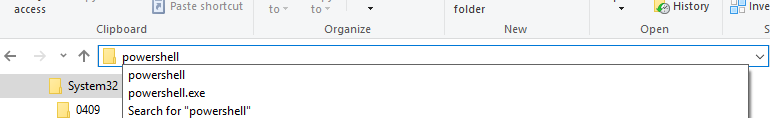

Windows Explorer

The final place I'm going to mention is Windows Explorer. If you have it open, you can launch PowerShell or the ISE in the current directory by typing PowerShell or PowerShell_ISE in the address bar like this:

Can you think of other ways to launch PowerShell (other than from PowerShell...that would be cheating)? Let me know in the comments.

--Mike

Old School PowerShell Expressions vs New

In a recent StackOverflow answer, I wrote the following PowerShell to find parameters that were in a given parameter set (edited somewhat for purposes of this post):

$commandName='Get-ChildItem'

Getting Data From the Middle of a PowerShell Pipeline

Pipeline Output

If you've used PowerShell for very long, you know how to get values of of a pipeline.

$values= a | b | c

Nothing too difficult there.

Where things get interesting is if you want to get data from the middle of the pipeline. In this post I'll give you some options (some better than others) and we'll look briefly at the performance of each.

Method #1

First, there's the lovely and often overlooked Tee-Object cmdlet. You can pass the name of a variable (i.e. without the $) to the -Variable parameter and the valu

es coming into the cmdlet will be written to the variable.

For instance:

Get-ChildItem c:\ -Recurse |

PowerShell Reflection-Lite

N.B. This is just a quick note to relate something I ran into in the last couple of weeks. Not an in-depth discussion of reflection.

N.B. This is just a quick note to relate something I ran into in the last couple of weeks. Not an in-depth discussion of reflection.

Reflection

Reflection is an interesting meta-programming tool. Using it, we can find (among other things) a constructor or method that matches whatever criteria we want including name, # of parameters, types of parameters, public/private, etc. As you can imagine, using reflection can be a chore.

I have never had to use reflection in PowerShell. Usually, `Get-Member` is enough to get me what I need.

Dynamic Commands in PowerShell

I have also talked before about how PowerShell lets you by dynamic in ways that are remarkably easy.

For instance, you can invoke an arbitrary command with arbitrary arguments with a command object (from `Get-Command`), and a hashtable of parameter/argument mappings simply using `& $cmd @params`.

That's crazy easy. Maybe I've missed that kind of functionality in other languages and it's been there, but I don't think so. At least not often.

I had also seen that the following work fine:

$hash=@{A=1;B=1}

No PowerShell Goals for 2018

After three years (2015, 2016, 2017) of publishing yearly goals, I've decided to not do that this year.

One reason is that I've not done a great job of keeping these goals in the forefront of my mind, so they haven't (for the most part) been achieved.

I definitely fell off the wagon a few times in terms of keeping up with regular posting here. 27 posts last year, so about one every 2 weeks. I'd like to get to where I'm posting twice per week.

I did not work on any new projects (writing, video course, etc.) throughout the year.

In 2017 I've been working on:

- VisioBot3000 - now in the PSGallery

- Southwest Missouri PowerShell User Group (SWMOPSUG) - meeting since June

- Speaking at other regional groups (STL and NWA)

Recently (mostly in 2018), I've also been working on:

- PowerShell Hosting

- WPF in PowerShell (without XAML)

I'm going to try to get back on the ball and post twice a week. Weekly goals rather than yearly...that way if I mess up a week, I can still succeed the next one. :-)

Mike

Visio Constants in VisioBot3000

One of the great things about doing Office automation (that is, COM automation of Office apps) is that all of the examples are filled with tons of references to constants. A goal of VisioBot3000 was to make using those constants as easy as possible.

I mentioned the issue of having so many constants to deal with in a post over 18 months ago, but haven't ever gotten around to showing how VisioBot3000 gives you access to some (most?) of the Visio constants.

First, here's a snippet of code from that post:

$connector.CellsSRC(1,23,10) = 16

Get-Learning : Why PowerShell?

As the first installment in this series, I want to go back to the topic I wrote on in my very first blog post back in 2009. In that post, I talked about why PowerShell (1.0) was something that I was interested enough in to start blogging.

Many of the points I mentioned there are still relevant, so I'll repeat them now. Here are some of the things that made PowerShell awesome to me in 2009:

- Ability to work with multiple technologies in a seamless fashion (.NET, WMI, AD, COM)

- Dynamic code for quick scripting, strongly-typed code for production code (what Bruce Payette calls “type-promiscuous”)

- High-level language constructs (functions, objects)

- Consistent syntax

- Interactive environment (REPL loop)

- Discoverable properties/functions/etc.

- Great variety of delivered cmdlets, even greater variety of community cmdlets and scripts

- On a similar note, a fantastic community that shares results and research

- Extensible type system

- Everything is an object

- Powerful (free) tools like PowerGUI, PSCX, PowerShell WMI Explorer, PowerTab, PrimalForms Community Edition, and many, many more. (ok...I don't use any of these anymore)

- Easy embedding in .NET apps including custom hosts.

- The most stable, well-thought out version 1.0 product I’ve ever seen MicroSoft produce.

- An extremely involved, encouraging community..

Of those things, the only ones that aren't very relevant are the "free tools" (those tools aren't relevant, but there are a lot of other new, free ones), and the 1.0 comment.

Since it's been almost 11 years now, instead of talking about 1.0, let's talk about now.

Microsoft, has placed PowerShell at the focus of its automation strategy. Instead of being an powerful tool which has a passionate community, it now is a central tool behind nearly everything that is managed on the Windows platform. And given the imminent release of PowerShell core, it will soon be (officially) available on OSX and Linux to provide some cross-platform functionality for those who want it. In 2009 you could leverage PowerShell to get more stuff done. Now, in 2017 you can't get much done without touching PowerShell.

Finally, PowerShell is a part of so many solutions now, including most (all?) of the management UIs and APIs coming out of Microsoft in the last several years. Microsoft is relying on PowerShell to be a significant part of their products. Other companies are doing the same, delivering PowerShell modules along with their products. They do this because it is a proven system for powerful automation.

Why PowerShell? Because it's awesome.

Why PowerShell? Because it's everywhere.

Why PowerShell? Because it's proven.

And my final point, which hasn't changed since I talked about it in 2009 is that PowerShell is fun!

Are you looking to start your PowerShell learning journey? Maybe you have already started and are looking for pointers. Perhaps you've got quite a bit of experience and you just want to fill in some gaps.

Follow along with me and get-learning!

--Mike

Get-Learning - Introducing a new series of PowerShell Posts

I've been blogging here since 2009. In that time, I've tried to focus on surprising topics, or at least topics that were things I had recently learned or encountered.

One big problem with that approach is that it makes it much more difficult to produce content.

I really enjoy writing, and I'm teaching PowerShell very frequently (a bit less than 10% of my time at work) so I'm in contact with basic PowerShell topics all the time.

With that in mind, I'm going to start writing PowerShell posts that are more geared towards beginning scripters.

The series, for which I'll be creating an "index page", will be called Get-Learning. I hope to write at least 2 or 3 posts in this series each week for the next several months.

If you have any suggestions for topics, drop me a line.

For now, though, watch this space.

--Mike

Calling Extension Methods in PowerShell

A quick one because it's Friday night.

I recently found myself translating some C# code into PowerShell. If you've done this, you know that most of it is really routine. Change the order of some things, change the operators, drop the semicolons.

In a few places you have to do some adjusting, like changing using scopes into try/finally with .Dispose() in the finally.

But all of that is pretty straightforward.

Then I ran into a method that wasn't showing up in the tab-completion. I hit the dot, and it wasn't in the list.

I had found....and extension method!

Extension Methods

In C# (and other managed languages, I guess), an extension method is a static method of a class whose first parameter is declared with the keyword this.

For instance,

[csharp]

public static class MyExtClass {

public static int NumberOfEs (thisstring TheString)

{

return TheString.Length-TheString.Replace ("e", "").Length;

}

}

[/csharp]

Calling this method in C# goes like this: "hello".NumberOfEs().

It looks like this method (which is in the class MyExtClass is actually a string method with no parameters.

Extension Methods in PowerShell

Unfortunately, PowerShell doesn't do that magic for you. In PowerShell, you call it just like it's written, a static method of a different class.

So, in PowerShell, we would do the following:

$code=@'

[MyExtClass]::NumberOfEs('hello')Deciphering PowerShell Syntax Help Expressions

In my last post I showed several instances of the syntax help that you get when you use get-help or -? with a cmdlet.

For instance:

This help is showing how the different parameters can be used when calling the cmdlet.

If you've never paid any attention to these, the notation can be difficult to work out. Fortunately, it's not that hard. There are only NNN different possibilities. In the following, I will be referring to a parameter called Foo, of type [BAR].

- An optional parameter that can be used by position or name:

[[-Foo] <Bar>]

- An optional parameter that can only be used by name:

[-Foo <bar>]

- A required parameter that can be used by position or name:

[-Foo] <Bar>

- An optional parameter that can be used only by name:

-Foo <Bar>

- A switch parameter (switches are always optional and can only be used by name)

[-Foo]

[-Foo <Switchparameter>] #odd, but you may see this in the help sometimes

So, in the example above we see that we have

- parm1, which is a parameter of type Object (i.e. no type specified), is optional and can be used by name or position

- parm2, which is a parameter of type Object, is optional and can only be used by name

- parm3, which is a parameter of type Object, is optional and can only be used by name

- parm4, which is a parameter of type Object, is optional and can only be used by name

With some practice, you will be reading more complex syntax examples like a pro.

Let me know if this helps!

--Mike

Specifying PowerShell Parameter Position

Positional Parameters

Whether you know it or not, if you've used PowerShell, you've used positional parameters. In the following command the argument (c:\temp) is passed to the -Path parameter by position.

cd c:\temp

The other option for passing a parameter would be to pass it by name like this:

cd -path c:\temp

It makes sense for some commands to allow you to pass things by position rather than by name, especially in cases where there would be little confusion if the names of the parameters are left out (as in this example).

What confuses me, however, is code that looks like this:

function Test-Position{

Missing the Point with PowerShell Error Handling

I've been using PowerShell for about 10 years now. Some might think that 10 years makes me an expert. I know that it really means I have more opportunities to learn. One thing that has occurred to me in the last 4 or 5 months is that I've been missing the point with PowerShell error handling.

PowerShell Error Handling 101

First, PowerShell has try/catch/finally, like most imperative languages have in the last 15 years or so. At first glance, there's not much to see. I usually give an example that looks something like this:

try {

Lots of Recent User Group Activity!

There has been a lot of PowerShell activity in Missouri lately.

I started the Southwest Missouri PSUG in June and have had 4 successful meetings covering the following topics:

- June - organizational

- July - Error Handling

- August - Pester

- September - DSC

I also spoke at the St. Louis PSUG in August (on Error Handling). Ken Maglio spoke in September on accessing Web services (especially RESTful services)

I was privileged to speak at the Springfield .NET UG last week and gave a "developer's overview of PowerShell". BTW, it's hard for me to try to sum up PowerShell and only talk for an hour. Had a great time, though.

Coming in December, I will be speaking at the Northwest Arkansas Developers Group (PowerShell-related topic TBD).

And, as an exciting addition, the Kansas City PSUG had their first meeting in September! I hope to be able to get up that way for a meeting or two before the year is out.

I really enjoy the energy and enthusiasm that I see in all of these groups and love to speak or listen to talented speakers in the community.

--Mike

Celebrating Fake Internet Points in the PowerShell Community

This week, I (finally) hit 10,000 points on StackOverflow. On some level, I know it's just fake internet points, but it's a nice milestone.

Like everyone I know in IT, I often find useful answers to questions I have on StackOverflow. Since there are so many answered questions on that site, I generally don't even need to ask the question, just search for it instead.

When I talk to people about StackOverflow, I always mention the awesome PowerShell presence there. Usually, if you ask a "good" question, you will have lots of people competing to quickly provide answers that are not only correct, but are also informative and helpful. I'm constantly amazed by the character of the PowerShell community. We're all about getting things done and sharing what we use to succeed with others. I'm proud to be a part of this wonderful community.

And that brings me to the part of this "celebration" that isn't fake.

In addition to this number:

You will also see this statistic:

That means (by StackOverflow's calculations, at least), that almost a million people have viewed my answers (and questions). That's a bit overwhelming. I can't tell you how many times I talk to people about PowerShell and they tell me that they've used one of my answers. A million people, though is more than I can fathom.

For what it's worth, I'm going to keep on writing, teaching, answering, and speaking about PowerShell. Maybe I'll hit 2 million.

--Mike

P.S. When I speak about StackOverflow, I also mean to include ServerFault, which is the sysadmin-oriented site in the same family. PowerShell questions pop up on both, but more often on the significantly more popular StackOverflow.

SWMO PSUG August Meetup - 8/1/2017 at the eFactory

This will be our third meeting!

I will be talking about Pester, primarily, but I will undoubtedly stray into poshspec, OVF, Watchman and maybe something else I find between now and then.

If you're in the area, we'd love to see you.

--Mike

Voodoo PowerShell - VisioBot3000 Lives Again!

I wrote a post about how VisioBot3000 had been broken for a while, and my attempts to debug and/or diagnose the problem.

I wrote a post about how VisioBot3000 had been broken for a while, and my attempts to debug and/or diagnose the problem.

In the process of developing a minimal example that illustrated the "breakage", I noticed that accessing certain Visio object properties caused the code to work, even if the values of those properties were not used at all.

It's been almost six months now, and I have no idea why that code makes any difference. So instead of letting VisioBot3000 die, I decided to take the easy route, and incorporate the "nonsense" code in the VisioBot3000 module.

If you look at the latest commit (as of this writing), the New-VisioContainer function (in VisioContainer.ps1) starts with the following single line of nonsense:

[void]$script:Visio.ActiveDocument.Pages[1]

In that code, I'm using a module-level reference to the Visio application, getting the active document from it, and retrieving the first page. And then I'm throwing away the reference that I just retrieved. The only thing that I can imagine is doing anything is the Pages[1] call. It's possible that the COM object is doing something internally in addition to pulling back the first page, but that's grasping at straws.

And that's why I call this Voodoo PowerShell. I'm using code that I don't understand because I get what I want from it. It's a meaningless ritual. I hate including it, but I hate that the module has been largely unchanged for a year even worse.

I will be trying to make more regular updates to VisioBot3000 in the near future, and will be presenting on it at the second SWMO PSUG meeting scheduled for next week.

Let me know what your thoughts are.

--Mike

Get-Command, Aliases, and a Bug

I stumbled across some interesting behavior the other day as I was demonstrating something that I understand pretty well.

[Side note...this is a great way to find out things that you don't know...confidently explain how something works, and demo it.]

I was asked to give an overview of how modules work in PowerShell. I've been writing and using modules since PowerShell 2.0 came out (2009?) so I didn't think there was anything (at least anything basic) that I wasn't comfortable with. Not to say that there aren't module concepts I'm not super-clear on, but the basics should have been all worked out.

After explaining the concepts of modules (encapsulating functions, variables, aliases) and showing how PowerShell knows where to look for modules, I turned to an example module I had written.

I won't replicate that module here, because the contents don't really matter. I've boiled the "weirdness" into a simple example and it looks like this:

function Get-Thing{

2 PowerShell Features I was Surprised to Love

After talking about features I don't want to talk about anymore I thought I would turn my attention to a couple of things in PowerShell that I initially felt were mistakes but have had a change of heart about.

For the most part, I think the PowerShell team does a fantastic job in terms of language design. They have made some bold choices in a few places, but time and time again their choices seem to me like the correct choices.

The two features I'm talking about today were things that, when I first heard about then, I thought "I'll never use that". Time has shown me that my reactions were in haste.

Module Auto-loading

I really like to be explicit about what I'm doing when I write a script. I like explicitly importing modules into a script. Knowing where the cmdlets used in a script come from is a big part of the learning process. As you read scripts (you do read scripts, don't you?), you can slowly expand your knowledge base as you start looking into functionality implemented in different modules. Another big advantage to explicitly importing modules into a script is that you're helping to define the set of dependencies of the script. "Oh, I need to have the SQLServer module installed to run this script...I thought it looked like a SQLPS script!". Since cmdlets can have similar names, explicitly loading the module can make it clear what's going on.

When I saw that PowerShell 3.0 introduced module auto-loading the first thing I thought was "I wonder how I can turn that off", followed closely by "I'm always going to turn that off on every system I use".

I hadn't met PowerShell 3.0 yet, though. The number of cmdlets jumped from several hundred to over two thousand. Knowing what cmdlets came from which modules became a much harder problem. There were so many more cmdlets (aided by cdxml modules) that keeping track was difficult.

Module auto-loading was a logical solution to the "too many modules and cmdlets" problem. I find myself depending on it almost every time I write a script.

I do like to explicitly import modules (either with import-module or via the module manifest) if I'm using something unusual, though.

Collection Properties

I don't know if there's an official name for this feature. Bruce Payette in PowerShell in Action calls this a "fallback dot operator". The idea is that you can use dot-notation against a collection to retrieve a collection of properties of the objects in the collection. Since that was probably as hard to read as it was to write, here's an example:

$filenames = (dir C:\temp).FullName

Clearly, an Array doesn't have a FullName property, right? And we already had 2 ways (the "old" way and the "aha" way) to do this:

$filenames = dir c:\temp | foreach-object {$_.FullName}

Generating All Case Combinations in PowerShell

At work, a software package that I'm dealing with requires that lists of file extensions for whitelisting or blacklisting be case-sensitive. I'm not sure why this is the case (no pun intended), but it is not the only piece of software that I've used with this issue.

What that means is that if you want to block .EXE files, you need to include 8 different variations of EXE (exe, exE, eXe, eXE, Exe, ExE, EXe, EXE). It wasn't too hard to come up with those, but what about ps1xml? 64 variations.

For fun, I wrote a small PowerShell function to generate a list of the different possibilities. It does this by looking at all of the binary numbers with the same number of bits as the extension, interpreting a 0 as lower-case and 1 as upper case.

Here it is:

function Get-ExtensionCases{

Hyper-V HomeLab Intro

So I've been playing with Hyper-V for a while now. If you recall it was one of my 2016 goals to build a virtualization lab.

I've done that, building out the base Microsoft Test Lab Guide several times:

- Manually (clicking in the GUI)

- Using PowerShell commands (contained in the guides)

- Using Lability and PS-AutoLab-Env

I was also fortunate enough to be a technical development editor for Learn Hyper-V in a Month of Lunches, which should be released this fall.

One thing that I've found is that being able to spin up a VM quickly is really nice. With the Hyper-V cmdlets, that's pretty easy.

Spinning up a machine from scratch and building a bootable image is not as easy. Fortunately there are some tools to help.

In this post, I'm going to share a simple function I've written to help me get things built faster.

The goal of the function is to take the following information:

- Which ISO to use

- Which edition from the ISO to select

- The Name of the VM (and VHDX)

- How much memory

- How many CPUs

With that information, it converts the windows image from the ISO to a VHDX, creates a VM with the right specs and using the VHDX, sets up the networking (or starts to, anyway), and starts the VM.

The bulk of the interesting work is done by Convert-WindowsImage, a function that pulls the correct image from an ISO and creates a virtual disk.

There are some problems with that script (read the Q&A on the Technet site and you'll see what I mean). The main one is when it tries to find the edition you ask for (by number or name). The code is in lines 4087-4095, and should look like this:

$Edition | ForEach-Object -Process {

$Edtn = $PSItem

if ([Int32]::TryParse($Edtn, [ref]$null)) {

PowerShell Topics I'm Ready to Stop Talking About

Part of me wants to know every bit of PowerShell there is. I know that's true about me, so I don't have much of an input filter. If the content is PowerShell-related, I'm interested.

When it comes to sharing, however, there's clearly got to be a point at which I shouldn't be talking about something. Here are a few items that I've spoken or taught about that I think are going to get pulled from my routine.

- The TRAP statement

- Obscure Operators

- Filters

- Tee-Object

- (bonus) Workflows

Let's go through them one by one and see why. And yes, I know that I'm talking about them, but this should be the last time (and this time I mean it).

The TRAP statement

The trap statement is the error handling statement that made the cut for v1.0 of PowerShell. If you weren't a PowerShell user at that time you probably haven't ever used it, favoring TRY/CATCH/FINALLY.

Instead of being a block-structured statement like TRY, TRAP worked in a scope, and functioned like a VB ON ERROR GOTO. The rules for program flow after a TRAP statement (which I've long forgotten) made understanding code that used TRAP into....a trap.

The advice I have given students in the past is, "If you stumble upon some code that uses TRAP, look for other code."

Obscure Operators

PowerShell has a lot of operators, and that's a good thing. On the other hand, I'm not sure why I need to tell people about every single operator. Some of the operators, though, are obscure enough that I haven't used them in any language more than a handful of times in the last thirty years. Candidates for expulsion (from discussion, not from the language) include:

- -SHL, -SHR (I guess someone does bitwise shifting, but I haven't ever needed this except in machine language)

- *=, /=, %= (I can see what these do, but I don't ever do much arithmetic so don't find the need for these "shorthand" operators)

Filters

Filters are another PowerShell 1.0 topic. They are one of the ways to use the pipeline for input without using advanced functions and parameter attributes. They're pretty slick, but are easily replaced with an advanced function with a process block. In the last 5 years, I've only seen filters used once (by Rob Campbell at a user group meeting).

Tee-Object

I generally consider the -Object cmdlets to be the backbone of PowerShell. They allow you to deal with pipeline objects "once-and-for-all" and not write a bunch of plumbing code in every function. For that reason, I like to talk about all of them. Tee-Object, however, might get sent to an appendix, because I don't see anyone using it and don't use it myself. This one might be changing as we see (being optimistic) people with more Linux backgrounds submitting PowerShell code. They use tee, right? I find that the -outvariable common parameter serves most of the need I would have for Tee-Object, so, it makes this list.

And finally,

Workflows

Workflows sound awesome. When you talk about workflows you get to use adjectives like "robust", and "resilient". And don't get me wrong Foreach-Object -Parallel is pretty sweet.

On the other hand, writing PowerShell in the workflow-subset of PowerShell is tricky. Remembering what needs to be an inlinescript and how to use/access variables in each kind of block is not fun.

I haven't ever used workflows for anything interesting, and have only heard a few examples of them being used by coworkers. Those examples could mostly be summed up by "I needed parallel".

It won't be hard for me to stop talking about workflows, as I've never really talked about them.

Before I get flamed because I included/excluded your favorite topic, these are just for me. If you like one of these, sell it! You might convince me to change my mind. Is there something that you think should fade away? Let me know what it is. I might be able to change your mind.

--Mike

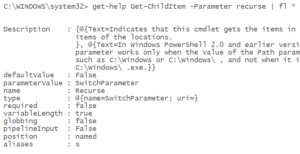

An Unexpected Parameter Alias

I've always said that if you want to learn something really well, teach it to someone. I've been doing internal PowerShell training for several years at my company. I'm very grateful for the opportunity for a number of reasons, but in this post I'm going to call out how something I learned in a recent trip to our San Diego office.

When I'm starting to talk about cmdlets, I usually use get-childitem for the simple reason that almost everyone knows what the DOS DIR command does. It gives us a point of reference to compare and contrast cmdlets with.

I mentioned the -Recurse switch and explained that it was analogous to the /S switch in DIR, but one person in the class didn't quite get the context switch. When he did one of the examples, he tried get-childitem -s. I told him that he needed to use -Recurse, to which he replied "But it works!".

I always keep a pad of paper when I'm teaching so I can write down anything puzzling (it happens in almost every class). When the class took a break, I opened a fresh PowerShell session and tried it.

Of course, it worked.

Now, to determine why it worked.

First of all, I thought that parameter disambiguation would have been a problem. because of the -System parameter. That wasn't a problem.

Then, I realized that the PowerShell team must have included a "legacy alias" for the -Recurse parameter, similar to how they include cmdlet aliases to ease the transition from DOS or *NIX (dir, ls, ps, cat, etc.). I don't think I've ever heard anyone mention legacy aliases for parameters, though.

PowerShell easily verifies that this is the case:

Of course, I verified this on my work computer. As I sit here writing on my home laptop, it didn't list any aliases until I updated help. Blogging is a lot like teaching in that you're bound to find surprises whenever you try to explain something.

Anyway, this was a fun discovery for me.

Can you think of any other parameter aliases that are there for legacy reasons? I might have to try to work up a script to find candidates.

Let me know what you think in the comments.

-Mike

PowerShell Parameter Disambiguation and a Surprise

When you're learning PowerShell one of the first things you will notice is that you don't have to use the full parameter name. This is because of something called parameter disambiguation.

When it works

For instance, instead of saying Get-ChildItem -Recurse, you can say Get-ChildItem -R. Get-ChildItem only has one (non-dynamic) parameter that started with the letter 'R'.. Since only one parameter matches, PowerShell figures you must mean that one. As a side note, dynamic parameters like -ReadOnly are created at run-time and are treated a bit differently.

Here's the error message. Notice that it included a couple of other parameters as possibilities:

AmbiguousParameter error

When it doesn't work

This doesn't always work, though. An easy example is with Get-Service. You can't say Get-Service -In because you haven't specified enough of the parameter name for PowerShell to work out what parameter you meant. With Get-Service, both -Include and -InputObject start with -In, so PowerShell can't tell which of these you meant.

Trying it ourselves

Let's write a quick function to make sure we understand what's going on.

function test-param{

When the PowerShell pipeline doesn't line up

The PowerShell Pipeline

One of the defining features of PowerShell is the object-oriented pipeline. The ability to "wire-up" parameters to the pipeline and allow objects (or properties) to be automatically assigned to them allows us to write code that is often variable-free.

By "variable-free", I mean that instead of doing something like this:

$services=Get-Service *SQL*

Great Books for PowerShell Ideas

I get asked a lot about what PowerShell books people should be reading. The easy answer is, "It depends".

If you're looking for a tutorial book (or two) to get you started with PowerShell, the only answer I give is "Learn PowerShell in a Month of Lunches", followed by "Learn PowerShell Toolmaking in a Month of Lunches". There are other good books in this space (including one I wrote), but these are by far the best I've found.

If you're looking for a reference book, I generally recommend Bruce Payette's "PowerShell in Action". It has a new version coming out soon (april?) and I can hardly wait. Besides that book, "PowerShell in Depth" (by Jones, Hicks, and Siddaway) is also a safe bet.

If you've got the basics of PowerShell down, and are looking for ideas for how to do something, here are some books that aren't mentioned as often, but are indispensible:

- PowerShell Cookbook (Lee Holmes)

- PowerShell Deep Dives (several)

- PowerShell for Developers (Doug Finke)

What are your book recommendations? Did I miss something essential?

-Mike

Some small PowerShell bugs I've found lately

I love PowerShell. Everyone who knows me knows that. Recently, though, I seem to be running into more bugs. I'm not complaining, because PowerShell does tons of amazing things and the problems I'm encountering don't have a huge impact. With that said, here they are.

Pathological properties in Out-GridView

PowerShell has always allowed us to use properties with names that aren't kosher. For instance, we can create an object that has properties with spaces and symbols in the name like this:

$obj=[pscustomobject]@{'test property #1'='hello'}

This capability is essential, since we often find ourselves importing a CSV file that we don't have any control over. (As an exercise, look at the expanded CSV output from schtasks.exe). To access those properties we can use quotes where most languages doesn't like them.

$obj.'test property #1'

Or we can use variables (again, something most languages won't let you do this easily):

$prop='test property #1'; $obj.$prop

A friend called me last week with an interesting issue which turned out to be related to this kind of behavior. He had a SQL query which renamed output columns in "pathological" ways. When he piped the output of the SQL to Out-GridView, the ugly columns showed up in the output, but the columns were empty.

Here's a minimal case to reproduce the issue:

[pscustomobject]@{'test property.'='hello'} | out-gridview

The problem here is that the property name ends with a dot. Here's a UserVoice entry that explains that Out-GridView doesn't like property names that end in whitespace, either. I added a comment about dots for completeness' sake.

Formatting remote Select-String output

Another minor issue I've run into is that deserialized select-string output doesn't format nicely. The issue looks to be that the format.ps1xml for MatchInfo objects uses a custom ToString() method that doesn't survive the serialization. What happens is that you just get blank lines instead of any helpful output. The objects are intact, though, all of the properties are there. So using the output is fine, just that the formatting is broken. Here's a minimal example:

"hello`r`n"*6 | Out-File c:\temp\testFile.txt

February STLPSUG Meeting

I had the privilege of sharing again at the STLPSUG. February's meeting was at Model Technologies, and Jason Rutherford was a great host.

I spoke on being a good citizen on the pipeline, both for output and input. Basically, best practices for pipeline output (which is fairly straight-forward), and techniques for accepting pipeline input (including $input, filters, and parameter attributes).

The group was a bit more advanced than usual, which was cool. There was a lot of fun heckling (I'll give you $5 if you put $input in the process block, for instance) and a lot of participation from everyone.

As usual, after the presentation the talk turned into a giant DevOps discussion.

If you live anywhere near St. Louis and haven't attended one of these meetings, I highly recommend them. Mike Lombardi has done a great job keeping the group moving and focused.

You can find out about upcoming meetings on meetup.com.

P.S. My friend and co-worker Ian was able to come with me this time. Made the drive a lot more fun, and he had a good time, too.

January St. Louis PSUG meeting was a blast!

A couple of weeks ago I had the pleasure to attend another STL PSUG meeting. Mike Lombardi presented on "Getting Started with a Real Problem" and did a great job.

His scenario was someone who didn't really know PowerShell at all and needed to troubleshoot a 3-server web farm where the nodes had different problems.

There were some technical difficulties with his lab setup (he used Lability, which was cool), but he stuck with it and we did all of the fixing in the scenario using a workstation rather than RDP'ing into the nodes.

The recording of the event (which was live-streamed) can be found here.

I will be presenting next month on writing functions that work with the pipeline.

--Mike

Debugging VisioBot3000

The Setup

The Setup

Sometime around late August of 2016, VisioBot3000 stopped working. It was sometime after the Windows 10 anniversary update, and I noticed that when I ran any of the examples in the repo, PowerShell hung whenever it tried to place a container on the page.

I had not made any recent changes to the code. It failed on every box I had.

First attempts at debugging

So...I really get fed up with people who want to blame "external forces" for problems in their code. When I found that none of the examples worked (though they obviously did when I wrote them), I figured that I must have done something stupid.

Hey! I'm using Git! Since I've got a history of 93 commits going back to march, I figured I could isolate the problem.

So...I reverted to a commit a few weeks earlier. And it failed exactly the same way.

Back a few weeks before that. No change.

Back to the first talk I gave at a user group....no change.

I gave up.

For several months.

Reaching out for help

After Thanksgiving, I posted a question on /r/Powershell explaining the situation. I got one reply, suggesting that I watch things in ProcMon while debugging. Seemed like a great thing to do, When I got around to trying it, however, it didn't show anything useful (at least to me...digging through the thousands of lines of output is somewhat difficult).

Making it Simple

Late last year, I thought, I should come up with a minimal verifiable example. Rather than say "all of my code breaks", I should be able to come up with the smallest possible example that breaks. To that end, I wanted to include as little VisioBot3000 code as I could, and show that something's up with Visio's COM interface (or something like that). To that end, I went back to the slides I used when demonstrating Visio automation to the St. Louis PSUG back in March of 2016 and cobbled together an example:

$Visio = New-Object –ComObject Visio.Application

Where I've been for the last few months

As I mentioned in my previous posts, I  kind of fell off the planet (blog-wise, at least) at the end of August. I had good intentions for finishing the year out strong. There were three different items that contributed to my downfall.

kind of fell off the planet (blog-wise, at least) at the end of August. I had good intentions for finishing the year out strong. There were three different items that contributed to my downfall.

First, I've been battling lots of different illnesses (none of them anything major) pretty much continually since early June. For three entire months, I coughed all the time. Right now, I can't hear in one ear because of the fluid backed up there. That ear has only been a problem for a few days, but the other one (which cleared out yesterday) had been full for three weeks. Like I said, nothing major, no life-threatening conditions, but over time it wears you down.

Second, I broke down and bought a server. I have been putting off this purchase, but some book royalty money came through and I pulled the trigger. Buying it didn't take long. What has been interesting is learning to do all of the things that most of you sysadmins take for granted. I've never really been a sysadmin, more of a developer/DBA/toolsmith who happens to really, really like a language which is designed for sysadmins. So, I've been building hyper-v hosts, lots of guests, building domains, joining domains, and trying to script as much as possible. I've learned a lot and there's still a lot to learn. Most of it, though, is not stuff that I'll probably blog about, because it's really basic. There might be a post or 2 coming, but it's hard to say.

Third, and this one is the most "fun", is that VisioBot3000 stopped working. If you haven't read my posts on automating Visio with PowerShell, VisioBot3000 is a module I wrote which allows you (among other things) to define your own PowerShell-based DSL to express diagrams in Visio. By "stopped working" I mean that sometime around the end of August, trying to use Visio containers always caused the code to hang.

I am pretty good at debugging, so I tried the usual tricks. I stepped through the code in the debugger. The line of code that was hanging was pretty innocent-looking. All of the variables had appropriate values. But it caused a hang. On my work laptop and my home laptop...two different OSes. I tried reverting to an old commit...No luck. I even tried copying code out of presentations I had done on VisioBot3000 and the results were the same. I even posted on the PowerShell subreddit asking for ideas on how to debug. The only suggestion was to use SysInternals process monitor to follow the two processes and see if I could find what was causing the issue. I tried that a week or two ago (sometime during the holidays) and guess what? It started working on my work laptop. Still doesn't work on my home laptop, though, or the VM I built and didn't allow to patch to see if a patch was the culprit.

Conclusion: I'm mostly better health-wise, am getting comfortable with the server, and VisioBot3000 is working somewhere, so I should be back on track with rambling about PowerShell.

--Mike

PowerShellStation 2017 Goals

Following up on yesterday's post reviewing my progress on goals from 2016, I thought I'd try to set out some goals for the new year. I'm going to group them into 3 groups: Technology, Community, and Content.

Content Goals

- Write 100 posts. I didn't do so well with this last year, but this year will be different. I'm not sure why I don't write more often. I enjoy writing and feel good about myself when I do it. I'm going to try to be consistent with it as well, not having several months with no posts.

- Write a book. I've written a couple of books with a publisher (here and here) and I think that was valuable experience. I'm going to try to do it on my own. That should enable me to keep the cost down. I'm also going to try to do it a lot quicker (and maybe shorter) than the other books. BTW...I've already started.

- Write a course. I love to teach PowerShell, and I've got a lot of practice doing it at work. I'm considering recording "lectures" for a course (like on Udemy).

- Edit/Contribute 50 topics on StackOverflow.com's Documentation project for PowerShell. It seems like a reasonable platform for information about PowerShell, and there are already a bunch of topics there ready to be filled in.

Community Goals

- Start a regional PSUG in Southwest Missouri. I live there, so it's silly for me to have to drive 3 hours to go to a user group meeting. I don't intend to stop going to those long-distance meetings altogether, but there are a lot of people in SWMO who don't have a group.

- Continue Speaking. If they'll have me, I plan to continue speaking at local or regional user groups. I'm also looking for "nearby" SQL Saturdays, PowerShell Saturdays, or other settings.

- Continue the UG and teaching at work. This one is pretty easy, but I don't want to get distracted and let these fall apart.

Technology Goals

- Get handy with DSC and/or Chef. I'm still scripting virtualization/provisioning "manually" (i.e. scripting the steps I'd do manually) rather than using a system to do that for me. I wanted to do it that way so I would understand what goes on, but now that I know, I want to be out of that business. DSC is almost certain to be part of the equation. Chef might be, but that's an open question (also, Packer, Vagrant, Ansible, etc.)

- Deploy operational tests with Pester/PoshSpec/OVF. I see a lot of promise with these, but everything is single-machine focused. Something like this looks like a good start, but needs some flexibility.

- Nano, Containers, (flavor of the month). This one is kind of a wildcard. These two (nano and containers) are new technology solutions that I understand at a surface level, but don't have a good idea why or where I would use them. I'm not sure if I'll dig into one of these two or something else that pops up in the year, but there will be an in-depth project.

Bonus Goal

If I can get good with DSC, I really want to be able to spin up an entire environment from scratch. By that, I mean from scripts (and downloaded ISOs) I want to be able to create a DC (with certificate services) and a DSC pull server, and then deploy the servers for a lab and have them configure themselves via the pull server. For more of a bonus, use the newly created certificate services server to handle the passwords properly in the DSC configs. By the way, I'm aware of Lability and PS-AutoLab-Env. They're both awesome but not quite what I'm looking for here.

Those ought to keep me busy for the year. What are you planning to do/share/learn this year? Write about it and post a link in the comments!

--Mike

PowerShellStation 2016 Goals Review

I did a goal review back on August 22, reporting some good progress on my yearly goals and some plans for the remainder of the year. Somehow, I seemed to have fallen off the earth since then. I only posted twice since

then, and both of those were in the week following the review. I'll be posting this week about what happened (spoiler alert...not much).

In the meantime, here's how I did on my goals for 2016

- 100 posts. I only got to 35. That's kind of embarassing. On the plus side, I had some of my best months in the last year (January - 10 posts, April - 8 posts, August - 7 posts). If I could keep that kind of momentum it would make a lot of difference. On the down side, if you exclude those 3 months I only has 10 posts in the remaining 9 months. That's abysmal.

- Virtualization Lab. In my review I mentioned that the box I bought to do virtualization on was only at 16GB of RAM and I needed to bump it up. Didn't do that. I also mentioned the possibility of buying an R710 off of eBay. Did that. Dual, quad-core cpus, 36GB of RAM, 8TB of storage (so far). I've done more virtualization since I bought it (in October) than I had ever done before. I can definitely say I got this goal accomplished!

- Configure Servers with DSC. Other than the talk I did at MidMo, I haven't really done much DSC this year. Now that I've got a solid lab machine, this is high on the list for 2017.

- PowerShell User Group. I've started a PSUG at work (I work for a sofware company, so there are hundreds of people using PowerShell) and we've had 3 meetings so far. They've mostly been sharing news and what we're working on, but it's a good start. Beginning to form a community there. Also, I attended several (more than a dozen, less than 20) meetings of local-ish PSUGs in Missouri. The two I know of are each a 3-hour drive one way to get to so that's a challenge but they've been great. They both started this year, and I've tried to lend my support as much as I can. I've spoken 6 or 7 times (I didn't keep track) and had a great time at all of the meetings.

- Continue Teaching at Work. Did lots of teaching. I'd have to check the calendar to get a real total, but it was at least 10 days of teaching.

- Share more on GitHub. Really got into Github this year. VisioBot3000, SQLPSX, POSH_Ado, etc. Next step: PowerShellGallery!

- Write more professional scripts. I think this will always be a goal of mine. I've published a couple of checklists and try to be thoughtful about how to write better code as I'm writing it, but I often find myself writing "throwaway" code and cleaning it up later. Need to eliminate as much of that first step as possible.

- Speak. I've spoken at 6 user group meetings this year and at 2 or 3 others in the past. If you've got a UG within driving distance of SW Missouri (KS, NW Arkansas, Oklahoma), let me know...I really enjoy sharing what I'm doing as well as speaking on "general" PowerShell topics. Also, it doesn't need to be a PSUG...I've spoken at .NET and SQL groups as well.

- Encourage. Another perennial task. I haven't been as active in this as I have in the past.

- Digest. (from the goal review)

I get about 10 different paper.li daily digests either in email or on twitter. I don't find a lot of value in them...they don't seem to be curated for the most part, but I think adding another into the fray at this point would probably be lost. I'm going to skip this one this year...but keep it on the back burner.

I've been thinking about maybe doing something slightly different here. Maybe a "module of the month" or "meet a PowerShell person" regular post. Any suggestions?

Well...by my count I accomplished 6 (maybe 7) of the 10 goals from last year. If you haven't thought about what you're going to try to accomplish this year I highly recommend you do. Remember, if you don't know where you're going, you might not like where you end up! A concrete list of goals, shared with friends (or with the public) makes it easy to know if you're achieving your goals or have lost sight of your goals.

--Mike

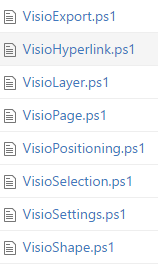

Module Structure Preferences (and my module history)

Modules in the Olden Days

Back in PowerShell 1.0 days, there were no modules. Uphill both ways. In 1.0, we only had script files. That means you had a couple of choices when it came to deploying functions. Either you put a bunch of functions in a .ps1 file and dot-sourced it, or you used parameterized scripts in place of functions. I guess you could have put a function in a .ps1 file, but you'd still need to dot-source it, right? Personally (at work), I had a bunch of .ps1 files, each devoted to a single "subject". I had one that dealt with db access (db_utils), one that interfaced with PeopleSoft (ps_utils), a module for scheduled tasks (schtasks_utils), and so on. You get the idea.

PowerShell 2.0 - The Dawn of Modules

One of the easiest features to love in PowerShell 2.0 was support for modules (although remoting and advanced functions are cool, they're not quite as easy). There's a $PSModulePath pointing to places to put modules in named folders, and in the folders you have .psm1 or .psd1 files. There are other options (like .dll), but for scripts, these are what you run into.

Transitioning into modules for me started easy: I just changed the extensions of the .ps1 files to .psm1. I had written functions (require and reload) which knew where the files were stored, and handled dot-sourcing them. You had to dot-source require and reload, but it was clear what was going on. When modules were introduced, I changed the functions to look for psm1 files and import with Import-Module if they existed, and just carry on as before otherwise.

That's Kind of Gross

Yep. No module manifests, and dozens of .psm1 files in the same folder. To make it worse, I wasn't even using the PSModulePath, because the .psm1 files weren't in proper named folders. The benefit for me was that I didn't have to change any code. I let that go for several years. Finally I broke down and put the files in proper folders and changed the code to stop using the obsolete require/reload functions and use Import-Module directly. I still haven't written module manifests for them. I'm so bad.

What about new modules?

Good question! For new stuff (written after 2.0 was introduced), I started with the same module structure: single .psm1 file with a bunch of functions in it. Probably put an Export-ModuleMember *-* in there to make sure that any helper functions don't "leak", but that was about it. To be fair, I didn't do a lot of module writing for quite a while, so this wasn't a real hindrance.

Is there a problem with that?

No...there's no problem with having a simple .psm1 script module containing functions. At least from a technical standpoint. Adding a module manifest is nice because you can document dependencies and speed up intellisense by listing public functions, but that's extra.

The problem came when I wrote a module with a bunch of functions. VisioBot3000 isn't huge, but it has 38 functions so far. At one point, the .psm1 file was over 1300 lines long. That's too much scrolling and searching in the file to be useful in my opinion.

What's the recommended solution?

I've seen several posts recommending that each function should be broken out into a single .ps1 file and the .psm1 file should dot-source them all. That definitely gets past the problem of having a big file. But in my mind it creates a different problem. The module directory (or sub-folder where the .ps1 files live) gets really big and it takes some work to find things. Lots of opening and closing of files. And the dot-sourcing operation isn't free...it takes time to dot-source a large set of files. Not a showstopper, but noticeable.

My tentative approach

How I've started organizing my modules is similar to how I organized "modules" in the 1.0 era. Back then, each file was subject-specific. In VisioBot3000, I split the functions out based on noun.

I still have relatively short source files, but now each file generally has a get/set pair, and if other functions use the same noun they're there too.

I've found that I often end up editing several functions in the same file to address issues, enhancements, etc. I think it makes sense from a discoverability standpoint as well. If I was looking at the source, I'd find functions which were related in the same file, rather than having to look through the directory for files with similar filenames.

Anyway, it's what I'm doing. You might be writing all scripts (no functions) and liking that. More power to you.

Let me know what you think.

--Mike

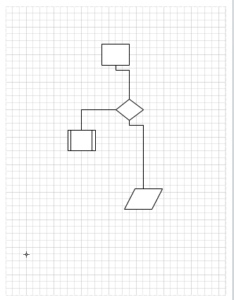

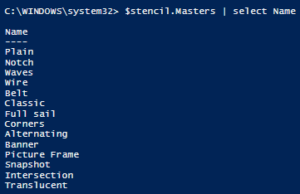

VisioBot3000 Settings Import

It's been a while since I last spoke about VisioBot3000. I've got the project to a reasonably stable point...not quite feature complete but I don't see a lot of big changes.

One of the things I found even as I wrote sample diagram scripts was that quite a bit of the script was taken up by things that wold probably be done exactly the same way in most diagrams. For instance, if you're doing a lot of server diagrams, you will probably be using the exact same stencils and the same shapes on those stencils, with the same nicknames. Doing so makes it a lot easier to write your diagram scripts because you're developing a "diagram language" which you're familiar with.

For reference, here's an example script from the project (without some "clean up" at the beginning):

Diagram C:\temp\TestVisio3.vsdx

August 2016 Goal Review

Thought I'd take a minute and review the progress on my goals for the year.

- 100 posts. I'm at 32 (33 if you count this) so it's going to take some dedication to make it. It's already 10 more than last year, so that's progress, but with 19 weeks left and 68 posts left, I'm going to have to beat 3 per week. I'm going to try. Might have to set a schedule (which would really help, but I'm not really wired that way).

- Virtualization Lab. I've got a couple of boxes running Hyper-V (client Hyper-V, but with nested virtualization I can get a lot closer). I've been playing with building servers, sysprep, differencing disks, etc. I feel like I've pretty much got this one covered. Need to jump the big box up to 32GB though. Thinking about getting a R710 off of eBay for a "next step" on this...maybe next year.

- Configure Servers with DSC. I've done some work with DSC both at home and at work, and gave a talk on DSC at the MidMO PSUG this month. Feel good about this, too.

- PowerShell User Group. First meeting at work is two days from now! I've also been to about a dozen meetings in Missouri (and posting about some of them). I've been honored to speak at 6 meetings so far as well, so this one is good. Bonus: I've started paperwork for reserving meeting space in the town I work (rather than 3 hours away), but haven't scheduled anything yet.

- Continue Teaching at Work. I might not get 10 sessions in, but I think I'm already to 7. Good on this one.