I’ve been using PowerShell for about 10 years now. Some might think that 10 years makes me an expert. I know that it really means I have more opportunities to learn. One thing that has occurred to me in the last 4 or 5 months is that I’ve been missing the point with PowerShell error handling.

PowerShell Error Handling 101

First, PowerShell has try/catch/finally, as most imperative languages have had for the last 15 years or so. At first glance, there’s not much to see. I usually give an example that looks something like this:

try {

#do something here

$x=1/0

write-verbose 'it worked'

} catch {

$err=$_

write-verbose "An error happened : $err"

} finally {

write-verbose 'Time to clean up'

}

Running that script with $VerbosePreference set to Continue would output

VERBOSE: An error happened : Attempted to divide by zero.

VERBOSE: Time to clean up

At this point in the explanation, most people with a development background of any kind are likely nodding their heads.

And now for something completely different

The next example shows that all is not as expected:

try {

$results=get-wmiobject -class Win32_ComputerSystem -computername Localhost,NOSUCHCOMPUTER

} catch {

$err=$_

write-verbose "An error happened : $err"

}

Most people are surprised to see red error text on the screen and the nice message nowhere to be found.

Anyone with much experience with PowerShell knows that some (most?) PowerShell cmdlets output error records (not exceptions) in some cases, and that try/catch doesn’t “catch” these error records. In PowerShell parlance, exceptions are terminating errors, and error records are non-terminating errors.

My explanation for why the PowerShell team created non-terminating errors is this:

Imagine you managed a farm of 1000 computers. What would be the odds of all 1000 of them responding correctly to a get-wmiobject call? If anyone in the class optimistically says anything other than “slim to none”, up the number to 10,000 and repeat.

With standard “programming semantics” (i.e. exceptions, terminating errors), a call to 1000 computers which failed on any of them would immediately throw an exception and leave the try block. At that point, all positive results are lost.

As a datacenter manager, is that how you want your automation engine to work? I don’t think so.

With non-terminating errors, the correct results are returned from the cmdlet and error records are output to the error stream. The error stream can be inspected to see what went wrong, and you still get the output.
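Here is a minimal sketch of that behavior. It uses Get-Item over a list of paths rather than Get-WmiObject so that it runs without any remote machines; the missing path name is made up for illustration, and merging the streams with 2>&1 is just one way to look at the output and the error records side by side.

```powershell
# One path exists, one does not (the missing name is hypothetical).
$paths = @($PSHOME, (Join-Path $PSHOME 'no-such-item-for-demo'))

# 2>&1 merges the error stream into the success stream so both can be captured.
$all = Get-Item -Path $paths 2>&1

# Split by type: error records are the failures, everything else is real output.
$failed    = @($all | Where-Object { $_ -is [System.Management.Automation.ErrorRecord] })
$succeeded = @($all | Where-Object { $_ -isnot [System.Management.Automation.ErrorRecord] })

"Succeeded: $($succeeded.Count), failed: $($failed.Count)"
```

The good path still produces its object even though the other path failed, which is the whole point of a non-terminating error.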

Where I missed the point

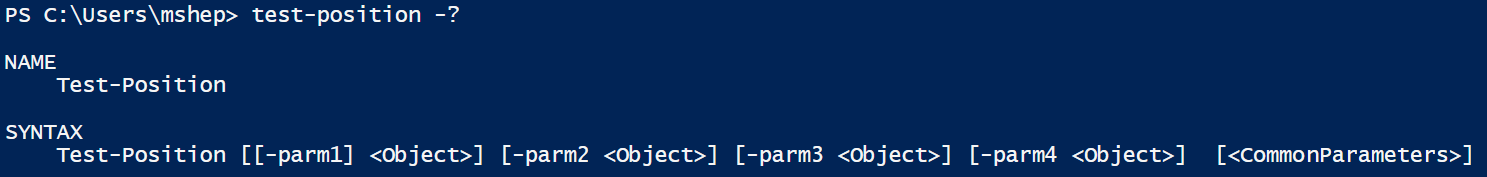

What I’ve been teaching (and I’m not alone) is that the solution is to use the -ErrorAction common parameter to cause the non-terminating error to be a terminating error. That means that we can use try/catch, but it also means that we need to introduce a loop.

Adding the try/catch and -ErrorAction, it looks something like this:

$results=@()

foreach($comp in $computers){

try {

$results+=get-wmiobject -class Win32_ComputerSystem -computername $comp -ErrorAction Stop

} catch {

$err=$_

write-verbose "Something went wrong with $comp : $err"

}

}

Before saying anything else, let me say this…it works.

Unfortunately, it misses the point.

An aside

If you ever find yourself writing code that sidesteps something that the PowerShell team put in place, you should take a step back and see if you’re doing the right thing. The PowerShell team is really, really smart, so if you’re working around them, you probably missed the point (like I did).

Why this is missing the point

One thing that people often miss about PowerShell cmdlets is how often they let you pass lists as arguments. The -ComputerName parameter is one such place. By passing a list of computers to Get-WMIObject, you let PowerShell execute the command against all of those computers “at the same time”. There is overhead, and it’s not multi-threaded, but since most of the work is being done on other machines, you really do get a huge performance increase. It might take five times longer to hit 100 machines than a single machine, but it won’t be anything like 100 times slower.

By introducing a loop, we’ve all but guaranteed that hitting 100 machines will take roughly 100 times as long as hitting one, because each cmdlet execution is being done in sequence. Using an array (or list) as the argument would allow most of the work to be done more or less in parallel.

That’s not to mention the fact that now we’ve taken on the responsibility of adding the individual results into a collection. Not a big deal, but anywhere you write more code is a place to have more bugs.

So what’s the right way to do this?

In my opinion, a much better way to do this kind of activity would be to continue to pass the list, but use the -ErrorAction and -ErrorVariable parameters in conjunction to get the best of both worlds. It would look something like this:

try {

$results=get-wmiobject -class Win32_ComputerSystem -computername $computers -ErrorAction SilentlyContinue -ErrorVariable Problems

} catch {

$err=$_

write-verbose "Something went wrong : $err"

}

foreach($errorRecord in $Problems){

write-verbose "An error occurred here : $errorRecord"

}

With this construction, we’re only calling get-wmiobject once, so we get the speed of parallel execution. By using -ErrorAction SilentlyContinue, we won’t have any error records (non-terminating errors) written to the error stream. That means no red text in our output. By the way, SilentlyContinue still adds the error records to the $Error automatic variable. If you don’t want that, you can use -ErrorAction Ignore instead.
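The difference between the two settings is easy to check for yourself. This is a small sketch, again using Get-Item with a made-up missing path instead of a WMI call, that counts what lands in $Error under each setting.

```powershell
# A path that does not exist (hypothetical name, used only to provoke an error).
$missing = Join-Path $PSHOME 'no-such-item-for-demo'

$Error.Clear()
Get-Item -Path $missing -ErrorAction SilentlyContinue
$afterSilentlyContinue = $Error.Count   # the error record was added to $Error

$Error.Clear()
Get-Item -Path $missing -ErrorAction Ignore
$afterIgnore = $Error.Count             # nothing was added to $Error

"SilentlyContinue: $afterSilentlyContinue, Ignore: $afterIgnore"
```

Neither call prints anything red; the only difference this sketch looks at is whether $Error remembers the failure afterwards.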

The “key” to making this technique work is -ErrorVariable Problems. This collects all of the non-terminating errors output by the command, and puts them in the variable $Problems (remember to leave the $ off when using -ErrorVariable). Since I have those in a variable, I can loop through them after I get the results and do whatever I need to with them.
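As a rough sketch of "whatever I need to" with the collected errors, the example below turns each error record into a small report object. It uses Get-Item with made-up missing paths so it runs locally; for Get-Item the TargetObject property holds the path that failed, but that is specific to the cmdlet, and other cmdlets may fill it differently or not at all.

```powershell
# One good path and two hypothetical bad ones.
$paths = @(
    $PSHOME
    (Join-Path $PSHOME 'no-such-item-1')
    (Join-Path $PSHOME 'no-such-item-2')
)

# One call for the whole list; failures are collected in $Problems, not shown.
$results = Get-Item -Path $paths -ErrorAction SilentlyContinue -ErrorVariable Problems

# Build one report row per error record.
$report = foreach ($errorRecord in $Problems) {
    [pscustomobject]@{
        Target   = $errorRecord.TargetObject
        Category = $errorRecord.CategoryInfo.Category
        Message  = $errorRecord.Exception.Message
    }
}

$report | Format-List
```

From here the report could be logged, exported, or used to build a retry list, while $results holds the objects that came back successfully.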

Finally (no pun intended), I put the cmdlet call in a try/catch in case it throws an exception (for instance, out of memory).

So, to summarize, I get the speed of only calling the cmdlet once, and I also get to do something with the errors on an individual basis.

I’m sure someone in the community is teaching this pattern, but I don’t remember seeing it.

What do you think?

–Mike

Why WMI instead of CIM?

I use Get-WMIObject (even though the WMI cmdlets are deprecated) for a couple of reasons. First, my work environment doesn’t have WinRM enabled on our laptops by default. I teach error handling before remoting, so at this point using CIM cmdlets with -ComputerName causes errors that are even harder to explain. Also, my first memorable exposure to non-terminating errors was with WMI cmdlets.

One unfortunate problem with using WMI cmdlets is that the error records they emit do not contain the offending computer name. I filed an issue in the appropriate place, but was told that it was too late for WMI cmdlets. Once the general principle of non-terminating errors is understood, substituting CIM cmdlets is an easy sell. Also, it’s a good reason for people to make the switch.